There is a version of “AI adoption” that most companies have already completed. They added a chatbot to their website. They connected ChatGPT to their Slack. They asked the marketing team to use AI for first drafts.

That is not what this article is about.

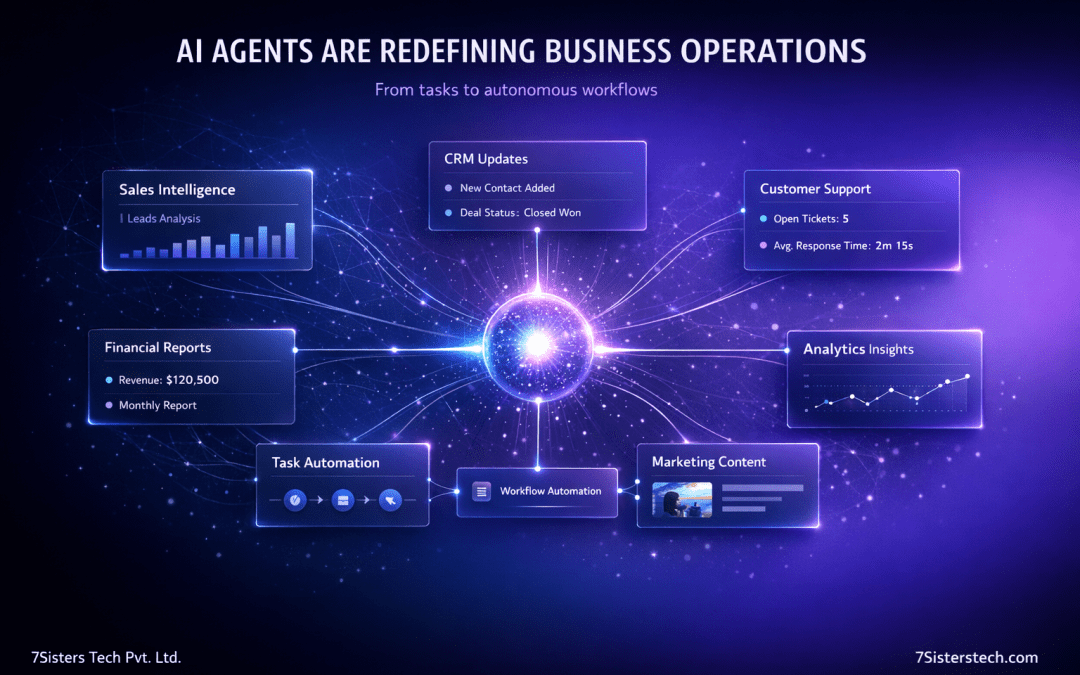

What is happening now, quietly, inside the companies that will define the next decade of competitive advantage, is fundamentally different. AI agents are not tools that assist humans with tasks. They are systems that complete tasks: perceiving a situation, reasoning through it, taking a sequence of actions, and delivering an outcome. Without a human prompt at each step. Across your entire business stack.

For founders, CTOs, and operations leaders evaluating where AI actually fits in their strategy, understanding the distinction between AI assistants and AI agents is not a technical nuance. It is a strategic imperative.

What an AI agent actually is – and what it is not

The term “AI agent” has been stretched beyond recognition by vendors eager to attach it to everything. A clear definition matters before any strategic discussion can happen.

An AI agent is a system that can:

- Perceive inputs from its environment (emails, databases, APIs, documents, web pages)

- Reason about what needs to happen next

- Select and use tools to take action (send an email, update a CRM, write code, call an external API)

- Evaluate results and course-correct

- Operate across multiple steps without a human prompt at each stage
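The loop described in the list above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the `Agent` class, the two tool names, and the keyword-matching `reason` method are all hypothetical stand-ins, and in a real system the reasoning step would be a call to a language model rather than a string check.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class Agent:
    """Toy agent: perceive, reason, act, record, and repeat until done."""
    tools: Dict[str, Callable[[str], str]]
    max_steps: int = 5
    log: List[str] = field(default_factory=list)

    def reason(self, observation: str) -> Optional[str]:
        # Stand-in for an LLM call: choose the next tool from the observation.
        if "unanswered" in observation:
            return "draft_reply"
        if "drafted" in observation:
            return "send_reply"
        return None  # nothing left to do

    def run(self, observation: str) -> str:
        for _ in range(self.max_steps):
            tool_name = self.reason(observation)
            if tool_name is None:
                break
            # Act, then treat the tool's result as the next observation.
            observation = self.tools[tool_name](observation)
            self.log.append(tool_name)  # kept so a human can audit the run
        return observation


# Hypothetical tools; real ones would call an email or CRM API.
tools = {
    "draft_reply": lambda obs: "reply drafted",
    "send_reply": lambda obs: "reply sent",
}
agent = Agent(tools=tools)
result = agent.run("unanswered email from customer")
```

The `max_steps` cap and the action log are the parts worth noticing: even a toy agent needs a stopping condition and a record of what it did.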

What an AI agent is not: a chatbot that answers questions, a template-filling assistant, or a rule-based automation that follows a fixed script. Those are valuable – but they are fundamentally reactive. An agent is proactive and adaptive.

The practical distinction: a chatbot tells your sales team which leads look promising. An AI agent researches each lead, drafts a personalised outreach sequence, schedules follow-ups, logs everything in your CRM, and flags the three accounts most likely to convert – without being asked.

That is not a marginal productivity improvement. That is a structural change in how work gets done.

Why 2026 is the inflection point – not 2023 or 2024

The concept of AI agents is not new. What has changed, rapidly, is the maturity of three converging conditions that make them practically deployable for real businesses.

Reasoning quality crossed a threshold. The large language models powering agents have become capable enough to handle ambiguous, multi-step tasks without producing nonsense at every other step. The error rate has dropped to a level where agents are genuinely useful in production – not just impressive in demos.

The tool ecosystem matured. Agents need to connect to the real world. The emergence of standardised integration protocols, pre-built connectors to major business software, and reliable function-calling capabilities in LLMs means agents can now operate across the software your business already uses – HubSpot, Salesforce, Notion, Jira, Stripe, Google Workspace – without months of custom engineering.

Cost economics shifted. Running an AI agent in 2023 was expensive enough to make broad deployment impractical. Inference costs have fallen dramatically. Organisations can now run agents at scale without the economics making every use case look marginal.

The businesses asking “should we look at AI agents?” today are already a cycle behind. The more useful question is: where in your operations do they create the most durable competitive advantage?

Where AI agents are already delivering measurable ROI

The use cases generating real, quantifiable value for businesses in 2026 are not speculative. They are operational.

Sales intelligence and outbound automation

Sales teams spend a disproportionate amount of their time on tasks that are information-intensive but not relationship-intensive: researching accounts, writing first drafts of outreach, tracking follow-up sequences, updating CRM records. AI agents handle all of this. An agent can monitor a prospect’s news coverage, LinkedIn activity, and funding announcements, then generate a contextually relevant, personalised message – and log every touchpoint automatically.

The commercial impact is not just efficiency. It is the ability to run a thoughtful, research-backed outbound motion at a scale that was previously only available to large sales organisations.

Finance and operational reporting

Monthly close processes, expense categorisation, variance analysis, and investor reporting are structured, data-heavy, and time-consuming. They are also exactly the type of work AI agents handle well. Finance teams using agents report meaningful compression in reporting cycle times – not because the agent replaces financial judgment, but because it eliminates the data-gathering and formatting work that consumed analyst hours.

Customer support resolution

First-line customer support is one of the highest-volume, highest-cost functions in most consumer-facing businesses. AI agents can handle the complete resolution of common issues – refund requests, account changes, technical troubleshooting, subscription modifications – covering not just the initial response but the full workflow through to closure. This shifts the support team’s time toward the genuinely complex cases that require human empathy and judgment.

Software development workflows

Engineering teams are using agents to handle code review commentary, generate test suites, write documentation, draft PR descriptions, and triage bug reports. The compounding effect here is significant: when developers spend less time on the operational overhead of software delivery, the velocity of the actual engineering work accelerates.

Content and marketing operations

AI agents can monitor search trends, identify content gaps, draft long-form content, optimise existing pages for updated keywords, repurpose content across formats, and schedule distribution – all as part of a coordinated workflow rather than a series of disconnected AI prompts.

The decision framework – is your business ready?

Not every organisation is at the right maturity level to deploy AI agents effectively. The following questions are the ones that matter most before committing resources:

Do you have clean, accessible data? Agents are only as useful as the information they can access. If your CRM is inconsistently populated, your documents are scattered across a dozen systems, or your APIs are not well-documented, agents will produce unreliable outputs. Data infrastructure is a prerequisite, not an afterthought.
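A rough first check on data readiness is simply measuring how often the fields an agent would rely on are actually filled in. The sketch below assumes CRM records exported as plain dictionaries; the field names and sample records are invented for illustration.

```python
from typing import Dict, List


def field_completeness(records: List[dict], required: List[str]) -> Dict[str, float]:
    """Return, for each required field, the share of records with a non-empty value."""
    if not records:
        return {name: 0.0 for name in required}
    return {
        name: sum(1 for r in records if r.get(name)) / len(records)
        for name in required
    }


# Hypothetical CRM export: one missing email, one missing owner.
crm_records = [
    {"email": "a@example.com", "owner": "kim"},
    {"email": "", "owner": "lee"},
    {"email": "c@example.com", "owner": None},
    {"email": "d@example.com", "owner": "kim"},
]
report = field_completeness(crm_records, ["email", "owner"])
```

Here both fields come out at 0.75. What counts as an acceptable threshold is a business decision, not something this check can answer.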

Do your core processes have enough structure to be describable? Agents work best when there is a definable goal, a set of available actions, and a way to evaluate success. Processes that are highly intuitive, deeply interpersonal, or dependent on tacit judgment are poor candidates for early agent deployment.

Can you tolerate a period of lower reliability during calibration? Even well-designed agents make mistakes in their early deployments. Building in the expectation that the first 60 to 90 days involve significant human review and correction is essential. Organisations expecting immediate, hands-off performance will be disappointed.

Strategic insights that most analysis misses

The compounding advantage is in the orchestration layer, not the individual agent

Most of the attention on AI agents focuses on single-agent capabilities – one agent doing one job. The strategic opportunity for businesses is in multi-agent systems: networks of specialised agents that pass work to each other, check each other’s outputs, and operate across an entire business function as a coordinated system.

A marketing function, for instance, could run a research agent that identifies audience trends, a content agent that drafts assets, a quality agent that reviews them against brand guidelines, and a distribution agent that schedules publication. The four agents collectively produce work that no single agent – and arguably no single team working conventionally – could match in speed and consistency.
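As a sketch of that hand-off pattern, the four agents can be modelled as functions that each add to a shared work item. Everything here is an assumption made for illustration: the function names, the hard-coded trend, and the 60-character brand rule. Each function stands in for what would be a model-backed agent in practice.

```python
from typing import Callable, List

Stage = Callable[[dict], dict]


def research_agent(work: dict) -> dict:
    work["trend"] = "short-form video"  # hypothetical research finding
    return work


def content_agent(work: dict) -> dict:
    work["draft"] = f"Five ways to use {work['trend']}"
    return work


def quality_agent(work: dict) -> dict:
    # Hypothetical brand guideline: titles stay under 60 characters.
    work["approved"] = len(work["draft"]) < 60
    return work


def distribution_agent(work: dict) -> dict:
    work["status"] = "scheduled" if work["approved"] else "returned for edits"
    return work


def run_pipeline(stages: List[Stage], work: dict) -> dict:
    """Pass one work item through each stage in order."""
    for stage in stages:
        work = stage(work)
    return work


pipeline = [research_agent, content_agent, quality_agent, distribution_agent]
outcome = run_pipeline(pipeline, {})
```

The orchestration layer is the `run_pipeline` function and the order of the list, which is where checks, retries, and human review points would be added.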

AI agents redefine the unit economics of scaling

The traditional growth model for most businesses involves a near-linear relationship between headcount and output. AI agents begin to break that relationship. A business that deploys agents effectively can grow its throughput – customer interactions handled, content pieces produced, reports generated, code shipped – without a proportional increase in operational headcount.

This is not primarily a cost-cutting story. It is a strategic leverage story. Capital that would have funded headcount growth can be redirected toward higher-value initiatives: product development, market expansion, customer success.

The differentiation window is shorter than most people assume

The barriers to deploying AI agents are falling. What is genuinely differentiating today – a sophisticated multi-agent workflow for sales intelligence, for example – will be table stakes in 18 months as more pre-built tooling enters the market. The first-mover advantage in AI agents is real, but it expires faster than most enterprise technology cycles. Companies that treat this as something to “monitor for now” risk arriving when the differentiation opportunity has already closed.

Risks and misconceptions that are costing businesses time and money

Misconception 1: AI agents are a plug-and-play solution.

The vendors selling agent platforms have strong incentives to make deployment sound frictionless. It is not. Integration with existing systems, definition of process workflows, data access configuration, and output validation all require meaningful investment. Businesses that approach agents with a “deploy and walk away” mindset consistently report poor results.

Misconception 2: Agents can replace human judgment on consequential decisions.

The current generation of AI agents is highly capable within well-defined domains and genuinely unreliable in others. Decisions that carry material legal, financial, or reputational consequences – pricing strategy, contract terms, hiring decisions, customer-facing communications in sensitive situations – require human review. Any deployment architecture that removes this review layer is introducing unquantified risk.
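One way to keep that review layer explicit is a routing rule that sits between the agent and execution. The sketch below is illustrative only: the category labels are taken from the examples in the paragraph above, and the `ProposedAction` type and `route` function are hypothetical names.

```python
from dataclasses import dataclass

# Assumed category labels, based on the examples named in the text.
CONSEQUENTIAL = {"pricing", "contract_terms", "hiring", "sensitive_customer_comms"}


@dataclass
class ProposedAction:
    category: str
    description: str


def route(action: ProposedAction) -> str:
    """Send consequential actions to a human queue; let the rest run automatically."""
    if action.category in CONSEQUENTIAL:
        return "human_review"
    return "auto_execute"


discount = ProposedAction("pricing", "Offer 20% discount to renew account")
crm_note = ProposedAction("crm_update", "Log call summary on account record")
decisions = [route(discount), route(crm_note)]
```

A deny-by-default variant, where only explicitly listed low-risk categories run automatically, may be the safer choice for early deployments.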

Misconception 3: The biggest risk is the agent doing something wrong.

Most organisations focus on output quality as their primary risk concern. The more significant risk is often subtler: an agent that performs adequately but not excellently, over a long period, in a way that no one is actively measuring. Process drift – where an agent’s outputs gradually shift away from what the business actually wants – is harder to detect than a clear failure, and more damaging over time.
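Process drift only becomes visible if someone is measuring. A simple approach, sketched here under the assumption that reviewers periodically score agent outputs between 0 and 1, is to compare a rolling average against a baseline. The window size, tolerance, and sample scores are all illustrative.

```python
from statistics import mean
from typing import List


def detect_drift(
    scores: List[float],
    baseline: float,
    window: int = 5,
    tolerance: float = 0.05,
) -> bool:
    """Return True when the mean of the last `window` scores is more than
    `tolerance` below the baseline. Returns False until enough scores exist."""
    if len(scores) < window:
        return False
    recent = mean(scores[-window:])
    return recent < baseline - tolerance


# Hypothetical weekly review scores: no single week looks alarming.
weekly_scores = [0.90, 0.89, 0.88, 0.87, 0.86, 0.85, 0.84, 0.83, 0.82, 0.81]
early = detect_drift(weekly_scores[:5], baseline=0.90)
later = detect_drift(weekly_scores, baseline=0.90)
```

In this example each week is only one point worse than the last, so no individual score stands out. The check stays quiet after five weeks and fires after ten, which is the pattern the paragraph above describes.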

Misconception 4: AI agents require a large engineering team to deploy.

This was true in 2023. It is increasingly false. The market for agent deployment tooling has matured significantly. Many organisations are deploying their first production agents with relatively small technical teams, using platforms that abstract away most of the infrastructure complexity. The bottleneck today is more often process design and change management than engineering capacity.

When NOT to adopt AI agents – the honest assessment

There are genuine situations where AI agent adoption is premature or inadvisable:

When your business is in a phase where the core process itself is still being figured out. Agents are good at executing and optimising defined processes. They are poor at discovering what the process should be. If you do not know how your best salesperson would handle a complex account situation, an agent cannot know either.

When you operate in a heavily regulated industry with strict accountability requirements. Sectors like financial advice, healthcare diagnosis, and legal services have compliance frameworks that require clear human accountability for decisions. Deploying agents in ways that obscure that accountability creates regulatory exposure that outweighs the operational benefit.

When your data is not ready. This bears repeating because it is the single most common reason AI agent deployments fail. Agents built on bad data, or operating without access to the right data, produce consistently unreliable outputs. No amount of sophistication in the agent architecture compensates for poor data infrastructure.

When your organisation lacks the change management capacity to support the transition. The technical deployment of an AI agent is often the easiest part. The harder work is retraining teams, redesigning roles, establishing new quality review processes, and building a culture comfortable with supervising AI outputs. Organisations without bandwidth for this will find that agents create friction rather than reducing it.

The outlook for the next 18 months

Several developments are likely to reshape the AI agents landscape significantly:

Standardisation of agent-to-agent communication protocols will accelerate multi-agent deployments. Right now, building systems where multiple agents coordinate requires custom integration work. As standards emerge, this becomes dramatically easier.

The arrival of agent marketplaces – curated, pre-built agents for specific business functions – will lower the barrier further. Rather than building from scratch, businesses will configure and deploy agents purpose-built for their use case.

Memory and context capabilities will improve, allowing agents to maintain longer-term awareness of business context, past interactions, and evolving priorities. This is what moves agents from useful tools to genuine business partners.

Regulatory frameworks will begin to catch up. The EU’s AI Act is already establishing obligations around high-risk AI uses. Businesses deploying agents now should be designing with compliance architecture in mind, not retrofitting it later.

The most important shift, however, will be cultural. As AI agents move from experimental to standard business infrastructure, the organisations with an advantage will not be those with the most advanced agents – they will be those where people have learned to work effectively alongside them.

Summary – five things to take from this article

First, AI agents represent a qualitatively different category from chatbots and automation tools. The strategic implications are substantially larger.

Second, the ROI opportunity is already real and measurable in sales, finance, customer support, engineering, and marketing – not theoretical.

Third, successful deployment requires data readiness, process clarity, and genuine investment in change management. Technical sophistication alone is insufficient.

Fourth, the differentiation window is real but closing. Organisations that are “monitoring the space” are already behind those treating it as an active strategic priority.

Fifth, human oversight is not optional. The businesses building the most effective AI agent systems are not removing humans from the loop – they are redesigning what humans do inside it.

If your organisation is evaluating where AI agents fit in your technology roadmap, the most useful starting point is not a vendor comparison. It is an honest audit of your data infrastructure, your most process-intensive functions, and your team’s capacity to manage the transition. That audit – not the technology itself – determines how quickly you can generate real value.